Artificial intelligence (AI) is no longer just a tool for positive advancements; its capabilities are increasingly exploited by malicious actors. This shift has made cyberattacks more accessible than ever. Individuals no longer need to be expert hackers with extensive programming knowledge to launch harmful campaigns. A basic understanding of social engineering and access to a few neural network services can suffice.

Cybercriminals frequently employ publicly available open-source tools and legitimate security testing frameworks, often supplemented by ready-made utilities sourced from the dark web. According to the Cybersecurity and Infrastructure Security Agency (CISA), common tools in these incidents include Metasploit, PowerShell frameworks, and remote management tools that automate exploitation and maintain access.

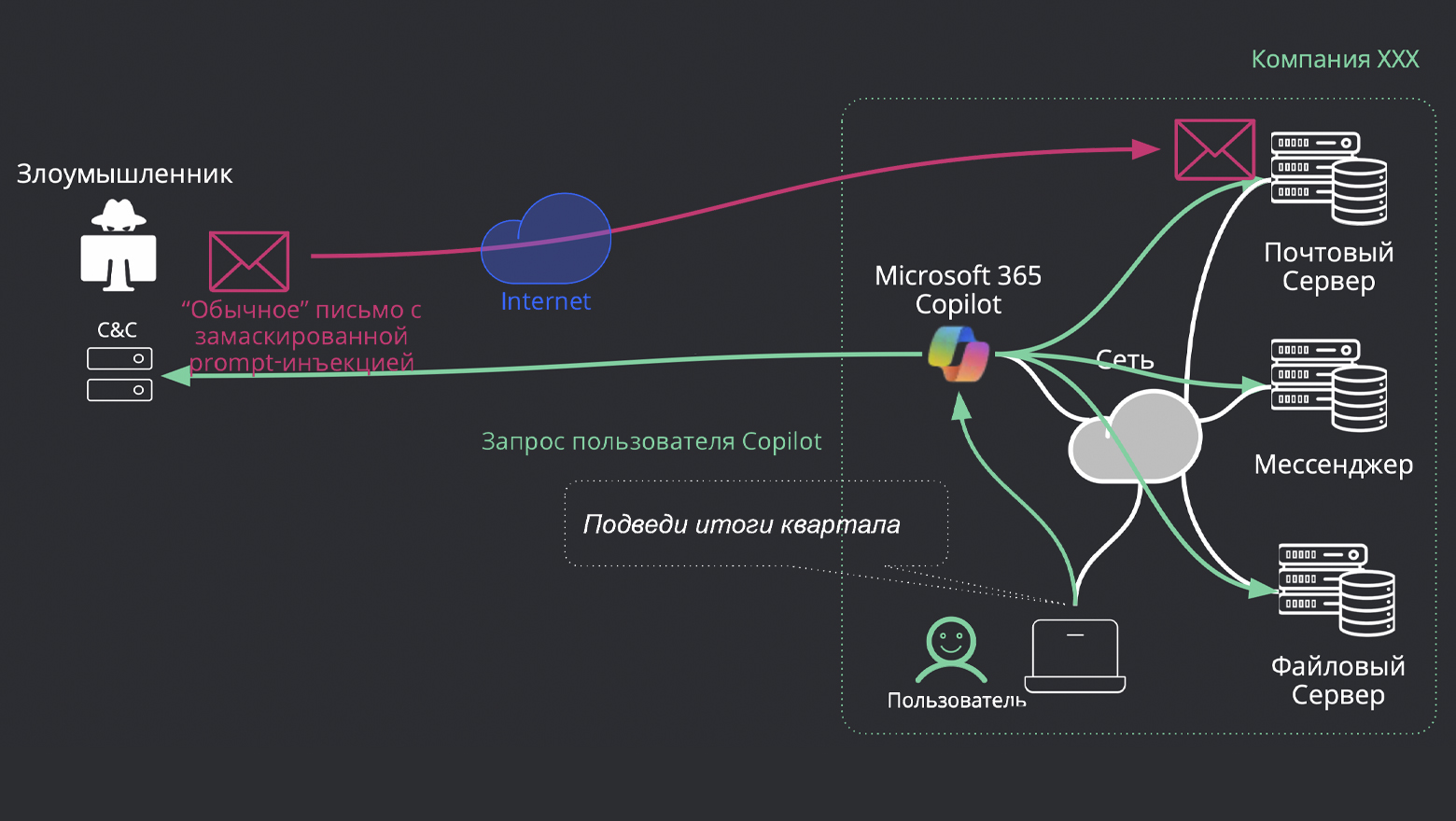

Phishing, a classic form of cybercrime, remains a prevalent threat. This tactic tricks users into revealing sensitive information such as passwords, bank card details, and other personal data through emails, websites, messages, or phone calls disguised as trustworthy sources. The goal is to steal money, gain access to accounts, or facilitate further attacks.

However, phishing has evolved. With the aid of generative models, it has become more sophisticated and personalized. Cybercriminals now use AI to tailor messages based on publicly available information from social media and corporate resources, adapting communications to fit the role and context of the target. Unlike previous phishing attempts marked by poor grammar and generic threats, modern AI-generated messages mimic corporate language accurately. Moreover, phishing attacks have expanded beyond emails to include messaging apps and corporate chats, often featuring fake websites, voice calls, and even video meetings enhanced with deepfake technology.

The quality of these phishing attacks has improved drastically, and success rates have risen with it. For instance, while traditional phishing might have a click-through rate (CTR) of around 12%, AI-generated phishing can reach as high as 54%. Generative models increase not only the quality of attacks but also their scale: they enable mass automation and the generation of numerous deceptive posts, comments, tweets, and articles that create a false impression of public opinion and can influence reputations and stock prices.
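To put the two click-through rates in perspective, the short sketch below works through the arithmetic. The 12% and 54% figures come from the text; the campaign size of 1,000 recipients and the helper name `expected_clicks` are illustrative assumptions, not data from any real campaign.

```python
# Click-through rates quoted in the article, expressed in percent.
TRADITIONAL_CTR_PCT = 12
AI_CTR_PCT = 54

# Hypothetical campaign size, chosen only to make the numbers concrete.
RECIPIENTS = 1000


def expected_clicks(recipients: int, ctr_pct: float) -> float:
    """Expected number of recipients who click, given a CTR in percent."""
    return recipients * ctr_pct / 100


traditional_clicks = expected_clicks(RECIPIENTS, TRADITIONAL_CTR_PCT)
ai_clicks = expected_clicks(RECIPIENTS, AI_CTR_PCT)

# How many times more effective the AI-generated messages are.
uplift = AI_CTR_PCT / TRADITIONAL_CTR_PCT

print(f"Traditional phishing: {traditional_clicks:.0f} clicks per {RECIPIENTS} messages")
print(f"AI-generated phishing: {ai_clicks:.0f} clicks per {RECIPIENTS} messages")
print(f"Relative uplift: {uplift:.1f}x")
```

Under these assumptions, the same mailing list yields 4.5 times as many clicks, before accounting for the extra volume that automation makes possible.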

Targeted misinformation campaigns pose a significant risk as well. In these campaigns, AI models adapt content for specific user groups based on their language, style, and interests. This puts tailored pressure on each audience, for example by disseminating false source code leaks backed by fabricated evidence.

Deepfake technology, which generates realistic audio and video that imitate real individuals, has surged in prevalence. The number of deepfake videos and audio files is expected to rise dramatically, from about 500,000 in 2023 to approximately 8 million by 2025. Disturbingly, people correctly recognize these deepfakes only about 24-25% of the time.
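The growth implied by these figures can be made explicit with a little arithmetic. The sketch below uses only the two counts quoted above; treating the growth as a constant year-over-year factor is a simplifying assumption made here for illustration, not something the underlying forecast states.

```python
# Deepfake counts quoted in the article (approximate).
COUNT_2023 = 500_000
COUNT_2025 = 8_000_000
YEARS = 2025 - 2023

# Overall growth across the whole period.
total_growth = COUNT_2025 / COUNT_2023

# Implied yearly multiplier, ASSUMING the same growth factor each year.
annual_factor = total_growth ** (1 / YEARS)

print(f"Total growth over {YEARS} years: {total_growth:.0f}x")
print(f"Implied annual growth factor: {annual_factor:.1f}x")
```

In other words, the forecast corresponds to a sixteenfold increase in two years, or roughly a quadrupling every year if the growth were evenly spread.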

One alarming application of deepfake technology is voice impersonation, known as deepvoice, which produces fake audio messages that sound convincingly like a relative or colleague. This technology has made it easier for criminals to orchestrate scams, with studies indicating that 40% of people would likely respond positively to a voice they believe belongs to a loved one.

In early 2024, a coordinated attack using deepfake video and synthesized voice tricked an employee of the British engineering company Arup into transferring approximately $25 million to criminals. Such incidents highlight the growing financial threat posed by deepfake technologies and underscore the urgent need for stronger protective measures.

Deepfake technology is also being used to create fake messages from celebrities and public figures in order to manipulate public opinion and incite panic. Because these materials can be generated so quickly, detecting them is nearly impossible without specialized tools.

The market implications of these trends are profound. Businesses must adapt quickly to these evolving threats by implementing advanced detection systems that analyze user behavior and message context. As AI continues to advance, cybersecurity vendors must innovate rapidly to safeguard against these increasingly sophisticated attacks.
