As the field of AI security continues to evolve, organizations face significant challenges in protecting their machine learning models and AI agents. Boris Matsakov, a Data Science engineer at Cloud.ru, highlights that there is currently no unified standard for securing AI systems, which complicates the implementation of effective security measures.

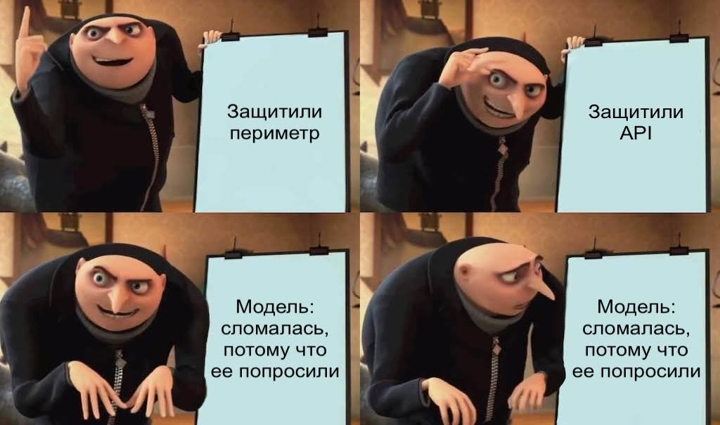

Different teams approach AI security in varying ways, often relying on traditional DevSecOps methods. However, these methods primarily protect perimeter boundaries, dependencies, infrastructure, and access controls. The specific risks associated with AI models, such as vulnerabilities in data and operational logic, are not adequately addressed by these conventional strategies. Consequently, it is essential to develop a distinct security framework tailored to AI agents that extends beyond basic DevSecOps practices.

In the current landscape, frameworks, typologies of attacks, and practical recommendations are emerging, but a cohesive standard is still lacking. Yet, there are foundational guidelines available, such as the OWASP Top 10 for large language model applications and a separate Top 10 for agentic applications, as well as the SAIF risk map and the MITRE ATLAS database of attack techniques.

The inadequacies of traditional cybersecurity measures become evident when considering the unique vulnerabilities of AI models. Unlike typical IT systems, where breaches occur through code or configuration weaknesses, AI-specific attacks target the data input and the underlying logic of the model itself. This means that a model may be secure at the API and infrastructure level but still produce harmful outcomes due to malicious input that appears legitimate.

For instance, an AI model trained on data from a compromised source might generate inaccurate or biased results, leading to reputational harm or even infrastructure threats if the AI agent interacts with databases or CI/CD pipelines. This has led to the emergence of specialized solutions known as AI firewalls, which inspect data entering and exiting LLM endpoints to identify prompt injections, jailbreaks, and sensitive data leaks.

The complexity of AI vulnerabilities is further amplified by the nature of AI models, which consider all input as part of the task. For instance, in systems using retrieval-augmented generation (RAG), malicious directives can be embedded within seemingly harmless documents, which the model then interprets as valid context. This indirect prompt injection represents a significant risk, as it can mislead the model into executing incorrect or harmful decisions.

Moreover, AI agents with memory capabilities present additional vulnerabilities. If an attacker gains access to the model's memory, they might manipulate the AI's responses over time, skewing financial reports to present an overly positive outlook. Even the best-trained models can perpetuate harmful patterns if the training data itself is compromised, as seen in data poisoning attacks where fraudulent transactions are mislabeled as legitimate.

To effectively secure AI models and agents, organizations need to focus on how models receive, store, and utilize information. While there is a growing awareness of the risks associated with AI and LLMs, the lack of a unified standard means that best practices are still being developed. Key security measures should include strict data provenance checks and robust training pipeline controls to mitigate data poisoning risks.

As the market adapts to these challenges, companies investing in AI security frameworks will likely gain a competitive edge over those that rely solely on traditional cybersecurity measures. The establishment of standardized practices will not only enhance the security of AI systems but also foster trust among users and stakeholders.

Informational material. 18+.