On March 12, 2026, concerns over the implications of artificial intelligence (AI) reached new heights as noted researcher Mrinank Sharma stepped down as head of AI safety at Anthropic, citing the dangers posed by unfettered AI development. Sharma's exit from the company, which focuses on creating safe and interpretable AI systems, underscores the urgent need for a balance between technological advancement and ethical considerations. In his farewell letter, he wrote that humanity's wisdom must grow in proportion to its influence, or dire consequences may follow.

The ongoing debate about AI's potential threats has been sharpened by recent developments in the field. In February, Zoë Hitzig of OpenAI resigned over ethical concerns, echoing Sharma’s warning about the existential risks AI poses. The increasing sophistication of AI tools is raising alarm because malicious actors could exploit these systems for cyberattacks or other harmful activities.

Anthropic's new model, Claude Opus 4.6, has discovered over 500 previously unknown security vulnerabilities, casting a spotlight on the need for rigorous security measures in software development. While the model can help make code more secure, it also poses new risks if misused.

Industry expert Paul Ford highlighted a significant shift in perceptions of AI-driven software development. Ford noted a remarkable improvement in Anthropic's AI, which has gone from being merely a helpful assistant to a powerful tool capable of expediting complex software projects. He remarked that tasks that would typically cost companies hundreds of thousands of dollars can now be accomplished with relative ease and at minimal cost, because AI-generated code, despite its limitations, can be delivered on deadline.

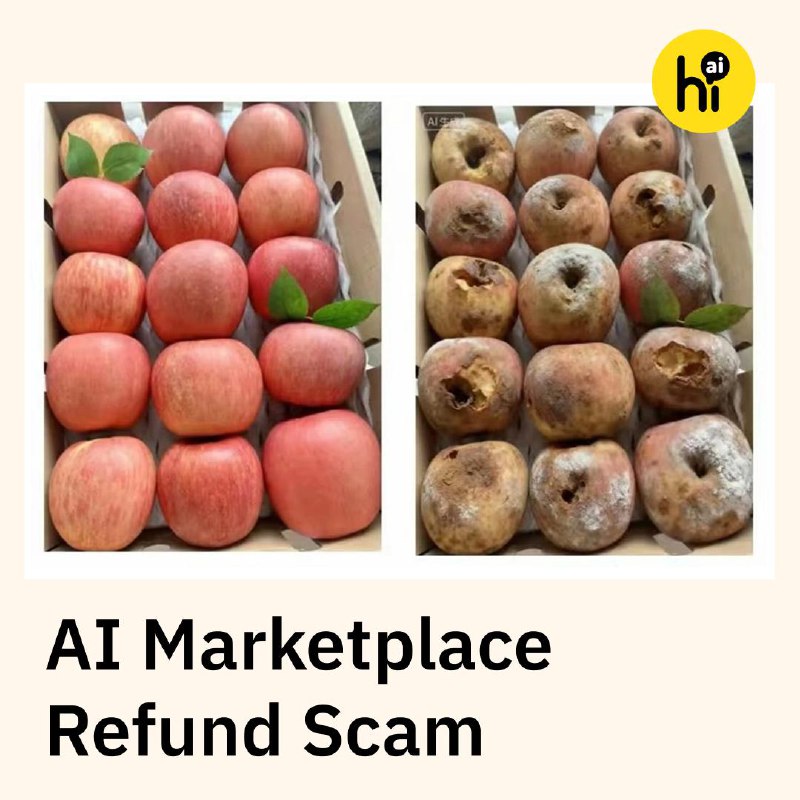

The current landscape poses a pivotal question for the traditional software market: Could the arrival of efficient yet less complex software disrupt established practices? If a surge of straightforward AI-created software floods the market, traditional software practices may become obsolete, drastically altering operational dynamics for corporate security teams.

As the industry grapples with these changes, the ongoing evolution of AI represents both a significant opportunity and a formidable challenge, compelling competitors to innovate responsibly while managing the associated risks. The future of software development hinges on finding a balance between efficiency and security in this rapidly advancing era of artificial intelligence.
