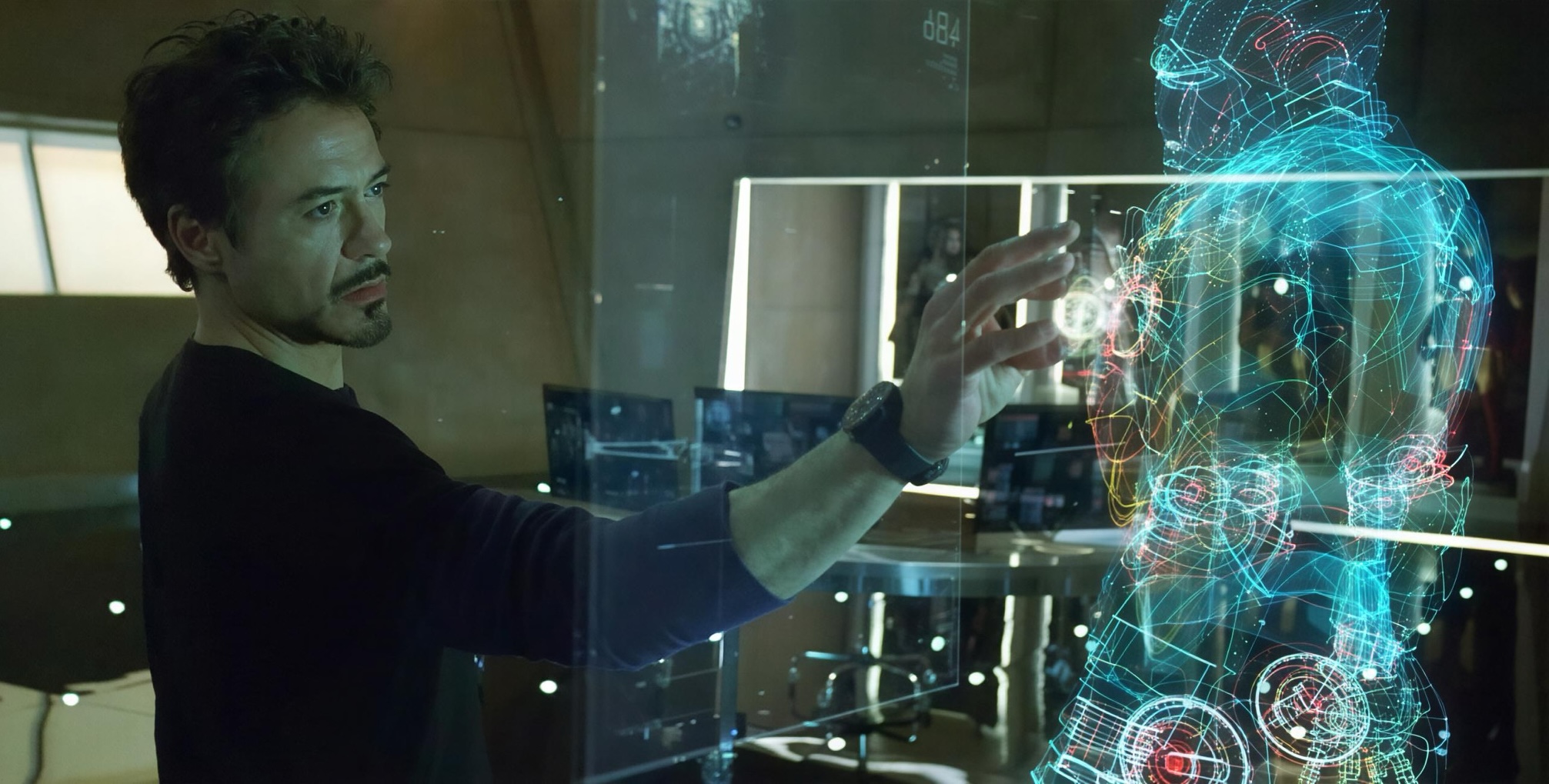

OpenAI's ChatGPT Health, launched in 2026, has drawn controversy over its recommendations for treating medical conditions. In one recent case, the AI suggested plantain as a remedy in place of critical life-saving procedures, alarming healthcare professionals. The incident is linked to Mount Sinai Hospital, where ChatGPT Health reportedly made erroneous suggestions in an evaluation of 60 patient cases spanning 21 clinical areas. Critics argue that relying on artificial intelligence for medical guidance could lead to dangerous outcomes, and experts urge caution when integrating AI into healthcare, particularly in situations where human intervention is vital.

As the debate over AI's role in medicine continues, the incident underscores the importance of human oversight in diagnosing and treating patients. The implications for the healthcare market are significant: hospitals and providers must weigh how to adopt AI technologies without compromising patient safety, and competitors in the health tech space may need to reevaluate their own AI strategies to avoid similar pitfalls.
